Microsoft Corp. today issued software updates to plug more than 70 security holes in its Windows operating systems and related products, including multiple zero-day vulnerabilities currently being exploited in the wild.

Six of the flaws fixed today earned Microsoft’s “critical” rating, meaning malware or miscreants could use them to install software on a vulnerable Windows system without any help from users.

Last month, Microsoft acknowledged a series of zero-day vulnerabilities in a variety of Microsoft products that were discovered and exploited in-the-wild attacks. They were assigned a single placeholder designation of CVE-2023-36884.

Satnam Narang, senior staff research engineer at Tenable, said the August patch batch addresses CVE-2023-36884, which involves bypassing the Windows Search Security feature.

“Microsoft also released ADV230003, a defense-in-depth update designed to stop the attack chain associated that leads to the exploitation of this CVE,” Narang said. “Given that this has already been successfully exploited in the wild as a zero-day, organizations should prioritize patching this vulnerability and applying the defense-in-depth update as soon as possible.”

Redmond patched another flaw that is already seeing active attacks — CVE-2023-38180 — a weakness in .NET and Visual Studio that leads to a denial-of-service condition on vulnerable servers.

“Although the attacker would need to be on the same network as the target system, this vulnerability does not require the attacker to have acquired user privileges,” on the target system, wrote Nikolas Cemerikic, cyber security engineer at Immersive Labs.

Narang said the software giant also patched six vulnerabilities in Microsoft Exchange Server, including CVE-2023-21709, an elevation of privilege flaw that was assigned a CVSSv3 (threat) score of 9.8 out of a possible 10, even though Microsoft rates it as an important flaw, not critical.

“An unauthenticated attacker could exploit this vulnerability by conducting a brute-force attack against valid user accounts,” Narang said. “Despite the high rating, the belief is that brute-force attacks won’t be successful against accounts with strong passwords. However, if weak passwords are in use, this would make brute-force attempts more successful. The remaining five vulnerabilities range from a spoofing flaw and multiple remote code execution bugs, though the most severe of the bunch also require credentials for a valid account.”

Experts at security firm Automox called attention to CVE-2023-36910, a remote code execution bug in the Microsoft Message Queuing service that can be exploited remotely and without privileges to execute code on vulnerable Windows 10, 11 and Server 2008-2022 systems. Microsoft says it considers this vulnerability “less likely” to be exploited, and Automox says while the message queuing service is not enabled by default in Windows and is less common today, any device with it enabled is at critical risk.

Separately, Adobe has issued a critical security update for Acrobat and Reader that resolves at least 30 security vulnerabilities in those products. Adobe said it is not aware of any exploits in the wild targeting these flaws. The company also issued security updates for Adobe Commerce and Adobe Dimension.

If you experience glitches or problems installing any of these patches this month, please consider leaving a comment about it below; there’s a fair chance other readers have experienced the same and may chime in here with useful tips.

Additional reading:

-SANS Internet Storm Center listing of each Microsoft vulnerability patched today, indexed by severity and affected component.

–AskWoody.com, which keeps tabs on any developing problems related to the availability or installation of these updates.

WormGPT, a private new chatbot service advertised as a way to use Artificial Intelligence (AI) to write malicious software without all the pesky prohibitions on such activity enforced by the likes of ChatGPT and Google Bard, has started adding restrictions of its own on how the service can be used. Faced with customers trying to use WormGPT to create ransomware and phishing scams, the 23-year-old Portuguese programmer who created the project now says his service is slowly morphing into “a more controlled environment.”

Image: SlashNext.com.

The large language models (LLMs) made by ChatGPT parent OpenAI or Google or Microsoft all have various safety measures designed to prevent people from abusing them for nefarious purposes — such as creating malware or hate speech. In contrast, WormGPT has promoted itself as a new, uncensored LLM that was created specifically for cybercrime activities.

WormGPT was initially sold exclusively on HackForums, a sprawling, English-language community that has long featured a bustling marketplace for cybercrime tools and services. WormGPT licenses are sold for prices ranging from 500 to 5,000 Euro.

“Introducing my newest creation, ‘WormGPT,’ wrote “Last,” the handle chosen by the HackForums user who is selling the service. “This project aims to provide an alternative to ChatGPT, one that lets you do all sorts of illegal stuff and easily sell it online in the future. Everything blackhat related that you can think of can be done with WormGPT, allowing anyone access to malicious activity without ever leaving the comfort of their home.”

In July, an AI-based security firm called SlashNext analyzed WormGPT and asked it to create a “business email compromise” (BEC) phishing lure that could be used to trick employees into paying a fake invoice.

“The results were unsettling,” SlashNext’s Daniel Kelley wrote. “WormGPT produced an email that was not only remarkably persuasive but also strategically cunning, showcasing its potential for sophisticated phishing and BEC attacks.”

A review of Last’s posts on HackForums over the years shows this individual has extensive experience creating and using malicious software. In August 2022, Last posted a sales thread for “Arctic Stealer,” a data stealing trojan and keystroke logger that he sold there for many months.

“I’m very experienced with malwares,” Last wrote in a message to another HackForums user last year.

Last has also sold a modified version of the information stealer DCRat, as well as an obfuscation service marketed to malicious coders who sell their creations and wish to insulate them from being modified or copied by customers.

Shortly after joining the forum in early 2021, Last told several different Hackforums users his name was Rafael and that he was from Portugal. HackForums has a feature that allows anyone willing to take the time to dig through a user’s postings to learn when and if that user was previously tied to another account.

That account tracing feature reveals that while Last has used many pseudonyms over the years, he originally used the nickname “ruiunashackers.” The first search result in Google for that unique nickname brings up a TikTok account with the same moniker, and that TikTok account says it is associated with an Instagram account for a Rafael Morais from Porto, a coastal city in northwest Portugal.

Reached via Instagram and Telegram, Morais said he was happy to chat about WormGPT.

“You can ask me anything,” Morais said. “I’m an open book.”

Morais said he recently graduated from a polytechnic institute in Portugal, where he earned a degree in information technology. He said only about 30 to 35 percent of the work on WormGPT was his, and that other coders are contributing to the project. So far, he says, roughly 200 customers have paid to use the service.

“I don’t do this for money,” Morais explained. “It was basically a project I thought [was] interesting at the beginning and now I’m maintaining it just to help [the] community. We have updated a lot since the release, our model is now 5 or 6 times better in terms of learning and answer accuracy.”

WormGPT isn’t the only rogue ChatGPT clone advertised as friendly to malware writers and cybercriminals. According to SlashNext, one unsettling trend on the cybercrime forums is evident in discussion threads offering “jailbreaks” for interfaces like ChatGPT.

“These ‘jailbreaks’ are specialised prompts that are becoming increasingly common,” Kelley wrote. “They refer to carefully crafted inputs designed to manipulate interfaces like ChatGPT into generating output that might involve disclosing sensitive information, producing inappropriate content, or even executing harmful code. The proliferation of such practices underscores the rising challenges in maintaining AI security in the face of determined cybercriminals.”

Morais said they have been using the GPT-J 6B model since the service was launched, although he declined to discuss the source of the LLMs that power WormGPT. But he said the data set that informs WormGPT is enormous.

“Anyone that tests wormgpt can see that it has no difference from any other uncensored AI or even chatgpt with jailbreaks,” Morais explained. “The game changer is that our dataset [library] is big.”

Morais said he began working on computers at age 13, and soon started exploring security vulnerabilities and the possibility of making a living by finding and reporting them to software vendors.

“My story began in 2013 with some greyhat activies, never anything blackhat tho, mostly bugbounty,” he said. “In 2015, my love for coding started, learning c# and more .net programming languages. In 2017 I’ve started using many hacking forums because I have had some problems home (in terms of money) so I had to help my parents with money… started selling a few products (not blackhat yet) and in 2019 I started turning blackhat. Until a few months ago I was still selling blackhat products but now with wormgpt I see a bright future and have decided to start my transition into whitehat again.”

WormGPT sells licenses via a dedicated channel on Telegram, and the channel recently lamented that media coverage of WormGPT so far has painted the service in an unfairly negative light.

“We are uncensored, not blackhat!” the WormGPT channel announced at the end of July. “From the beginning, the media has portrayed us as a malicious LLM (Language Model), when all we did was use the name ‘blackhatgpt’ for our Telegram channel as a meme. We encourage researchers to test our tool and provide feedback to determine if it is as bad as the media is portraying it to the world.”

It turns out, when you advertise an online service for doing bad things, people tend to show up with the intention of doing bad things with it. WormGPT’s front man Last seems to have acknowledged this at the service’s initial launch, which included the disclaimer, “We are not responsible if you use this tool for doing bad stuff.”

But lately, Morais said, WormGPT has been forced to add certain guardrails of its own.

“We have prohibited some subjects on WormGPT itself,” Morais said. “Anything related to murders, drug traffic, kidnapping, child porn, ransomwares, financial crime. We are working on blocking BEC too, at the moment it is still possible but most of the times it will be incomplete because we already added some limitations. Our plan is to have WormGPT marked as an uncensored AI, not blackhat. In the last weeks we have been blocking some subjects from being discussed on WormGPT.”

Still, Last has continued to state on HackForums — and more recently on the far more serious cybercrime forum Exploit — that WormGPT will quite happily create malware capable of infecting a computer and going “fully undetectable” (FUD) by virtually all of the major antivirus makers (AVs).

“You can easily buy WormGPT and ask it for a Rust malware script and it will 99% sure be FUD against most AVs,” Last told a forum denizen in late July.

Asked to list some of the legitimate or what he called “white hat” uses for WormGPT, Morais said his service offers reliable code, unlimited characters, and accurate, quick answers.

“We used WormGPT to fix some issues on our website related to possible sql problems and exploits,” he explained. “You can use WormGPT to create firewalls, manage iptables, analyze network, code blockers, math, anything.”

Morais said he wants WormGPT to become a positive influence on the security community, not a destructive one, and that he’s actively trying to steer the project in that direction. The original HackForums thread pimping WormGPT as a malware writer’s best friend has since been deleted, and the service is now advertised as “WormGPT – Best GPT Alternative Without Limits — Privacy Focused.”

“We have a few researchers using our wormgpt for whitehat stuff, that’s our main focus now, turning wormgpt into a good thing to [the] community,” he said.

It’s unclear yet whether Last’s customers share that view.

One frustrating aspect of email phishing is the frequency with which scammers fall back on tried-and-true methods that really have no business working these days. Like attaching a phishing email to a traditional, clean email message, or leveraging link redirects on LinkedIn, or abusing an encoding method that makes it easy to disguise booby-trapped Microsoft Windows files as relatively harmless documents.

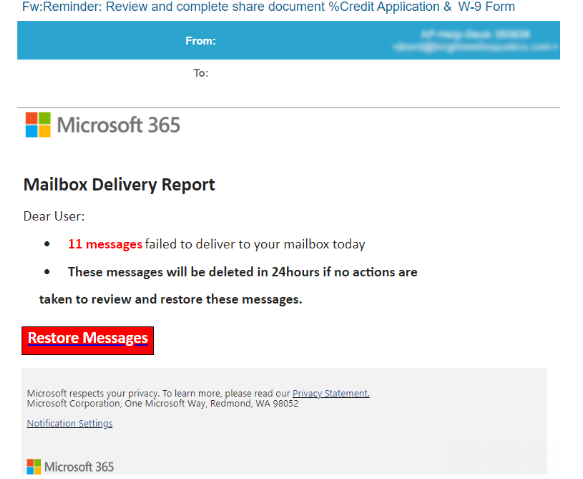

KrebsOnSecurity recently heard from a reader who was puzzled over an email he’d just received saying he needed to review and complete a supplied W-9 tax form. The missive was made to appear as if it were part of a mailbox delivery report from Microsoft 365 about messages that had failed to deliver.

The reader, who asked to remain anonymous, said the phishing message contained an attachment that appeared to have a file extension of “.pdf,” but something about it seemed off. For example, when he downloaded and tried to rename the file, the right arrow key on the keyboard moved his cursor to the left, and vice versa.

The file included in this phishing scam uses what’s known as a “right-to-left override” or RLO character. RLO is a special character within unicode — an encoding system that allows computers to exchange information regardless of the language used — that supports languages written from right to left, such as Arabic and Hebrew.

Look carefully at the screenshot below and you’ll notice that while Microsoft Windows says the file attached to the phishing message is named “lme.pdf,” the full filename is “fdp.eml” spelled backwards. In essence, this is a .eml file — an electronic mail format or email saved in plain text — masquerading as a .PDF file.

“The email came through Microsoft Office 365 with all the detections turned on and was not caught,” the reader continued. “When the same email is sent through Mimecast, Mimecast is smart enough to detect the encoding and it renames the attachment to ‘___fdp.eml.’ One would think Microsoft would have had plenty of time by now to address this.”

Indeed, KrebsOnSecurity first covered RLO-based phishing attacks back in 2011, and even then it wasn’t a new trick.

Opening the .eml file generates a rendering of a webpage that mimics an alert from Microsoft about wayward messages awaiting restoration to your inbox. Clicking on the “Restore Messages” link there bounces you through an open redirect on LinkedIn before forwarding to the phishing webpage.

As noted here last year, scammers have long taken advantage of a marketing feature on the business networking site which lets them create a LinkedIn.com link that bounces your browser to other websites, such as phishing pages that mimic top online brands (but chiefly Linkedin’s parent firm Microsoft).

The landing page after the LinkedIn redirect displays what appears to be an Office 365 login page, which is naturally a phishing website made to look like an official Microsoft Office property.

In summary, this phishing scam uses an old RLO trick to fool Microsoft Windows into thinking the attached file is something else, and when clicked the link uses an open redirect on a Microsoft-owned website (LinkedIn) to send people to a phishing page that spoofs Microsoft and tries to steal customer email credentials.

According to the latest figures from Check Point Software, Microsoft was by far the most impersonated brand for phishing scams in the second quarter of 2023, accounting for nearly 30 percent of all brand phishing attempts.

An unsolicited message that arrives with one of these .eml files as an attachment is more than likely to be a phishing lure. The best advice to sidestep phishing scams is to avoid clicking on links that arrive unbidden in emails, text messages and other mediums. Most phishing scams invoke a temporal element that warns of dire consequences should you fail to respond or act quickly.

If you’re unsure whether a message is legitimate, take a deep breath and visit the site or service in question manually — ideally, using a browser bookmark to avoid potential typosquatting sites.

Researchers say mobile malware purveyors have been abusing a bug in the Google Android platform that lets them sneak malicious code into mobile apps and evade security scanning tools. Google says it has updated its app malware detection mechanisms in response to the new research.

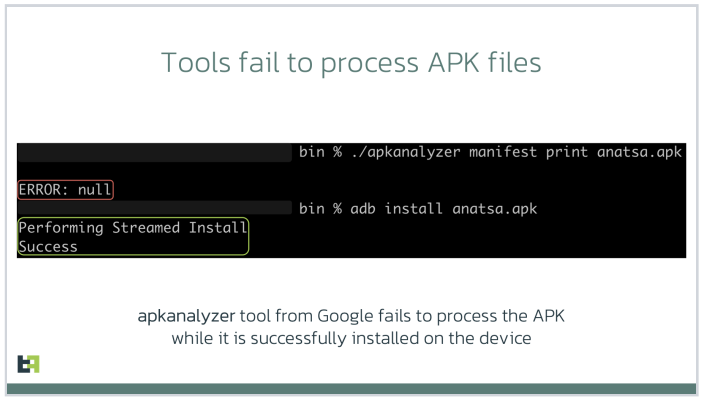

At issue is a mobile malware obfuscation method identified by researchers at ThreatFabric, a security firm based in Amsterdam. Aleksandr Eremin, a senior malware analyst at the company, told KrebsOnSecurity they recently encountered a number of mobile banking trojans abusing a bug present in all Android OS versions that involves corrupting components of an app so that its new evil bits will be ignored as invalid by popular mobile security scanning tools, while the app as a whole gets accepted as valid by Android OS and successfully installed.

“There is malware that is patching the .apk file [the app installation file], so that the platform is still treating it as valid and runs all the malicious actions it’s designed to do, while at the same time a lot of tools designed to unpack and decompile these apps fail to process the code,” Eremin explained.

Eremin said ThreatFabric has seen this malware obfuscation method used a few times in the past, but in April 2023 it started finding many more variants of known mobile malware families leveraging it for stealth. The company has since attributed this increase to a semi-automated malware-as-a-service offering in the cybercrime underground that will obfuscate or “crypt” malicious mobile apps for a fee.

Eremin said Google flagged their initial May 9, 2023 report as “high” severity. More recently, Google awarded them a $5,000 bug bounty, even though it did not technically classify their finding as a security vulnerability.

“This was a unique situation in which the reported issue was not classified as a vulnerability and did not impact the Android Open Source Project (AOSP), but did result in an update to our malware detection mechanisms for apps that might try to abuse this issue,” Google said in a written statement.

Google also acknowledged that some of the tools it makes available to developers — including APK Analyzer — currently fail to parse such malicious applications and treat them as invalid, while still allowing them to be installed on user devices.

“We are investigating possible fixes for developer tools and plan to update our documentation accordingly,” Google’s statement continued.

Image: ThreatFabric.

According to ThreatFabric, there are a few telltale signs that app analyzers can look for that may indicate a malicious app is abusing the weakness to masquerade as benign. For starters, they found that apps modified in this way have Android Manifest files that contain newer timestamps than the rest of the files in the software package.

More critically, the Manifest file itself will be changed so that the number of “strings” — plain text in the code, such as comments — specified as present in the app does match the actual number of strings in the software.

One of the mobile malware families known to be abusing this obfuscation method has been dubbed Anatsa, which is a sophisticated Android-based banking trojan that typically is disguised as a harmless application for managing files. Last month, ThreatFabric detailed how the crooks behind Anatsa will purchase older, abandoned file managing apps, or create their own and let the apps build up a considerable user base before updating them with malicious components.

ThreatFabric says Anatsa poses as PDF viewers and other file managing applications because these types of apps already have advanced permissions to remove or modify other files on the host device. The company estimates the people behind Anatsa have delivered more than 30,000 installations of their banking trojan via ongoing Google Play Store malware campaigns.

Google has come under fire in recent months for failing to more proactively police its Play Store for malicious apps, or for once-legitimate applications that later go rogue. This May 2023 story from Ars Technica about a formerly benign screen recording app that turned malicious after garnering 50,000 users notes that Google doesn’t comment when malware is discovered on its platform, beyond thanking the outside researchers who found it and saying the company removes malware as soon as it learns of it.

“The company has never explained what causes its own researchers and automated scanning process to miss malicious apps discovered by outsiders,” Ars’ Dan Goodin wrote. “Google has also been reluctant to actively notify Play users once it learns they were infected by apps promoted and made available by its own service.”

The Ars story mentions one potentially positive change by Google of late: A preventive measure available in Android versions 11 and higher that implements “app hibernation,” which puts apps that have been dormant into a hibernation state that removes their previously granted runtime permissions.

Firefox